As artificial intelligence transforms nearly every profession, criminal defense is quietly undergoing its own technological reckoning. One of the attorneys exploring that shift is Brett M. Rosen, a New Jersey-based criminal defense lawyer and partner at Proetta, Oliver, Fay & Rosen, who is using emerging AI tools to rethink how defense teams prepare for court.

Rosen’s practice focuses on violent crimes, domestic violence, drug offenses, and sex crimes—areas where evidence often turns on fast-moving facts and complex discovery. While many lawyers still rely on traditional document review, Rosen says large-language-model technology now allows him to “ask hypothetical questions, test legal theories, and find inconsistencies in police reports” long before trial.

“The point isn’t to let the machine decide a case,” Rosen explained. “It’s to pressure-test the human reasoning behind every witness statement and every piece of evidence.”

That philosophy has made its way into the courtroom. In a recent New Jersey trial, Rosen used AI tools to help structure cross-examination and prepare his closing argument, feeding the system anonymized discovery data to look for contradictions in officer narratives and physical evidence. He says the software helped identify subtle inconsistencies that influenced the case’s key themes and improved his preparation.

“It didn’t replace my judgment,” Rosen said. “But it made me a sharper cross-examiner. The program caught small gaps that humans might miss at first glance.”

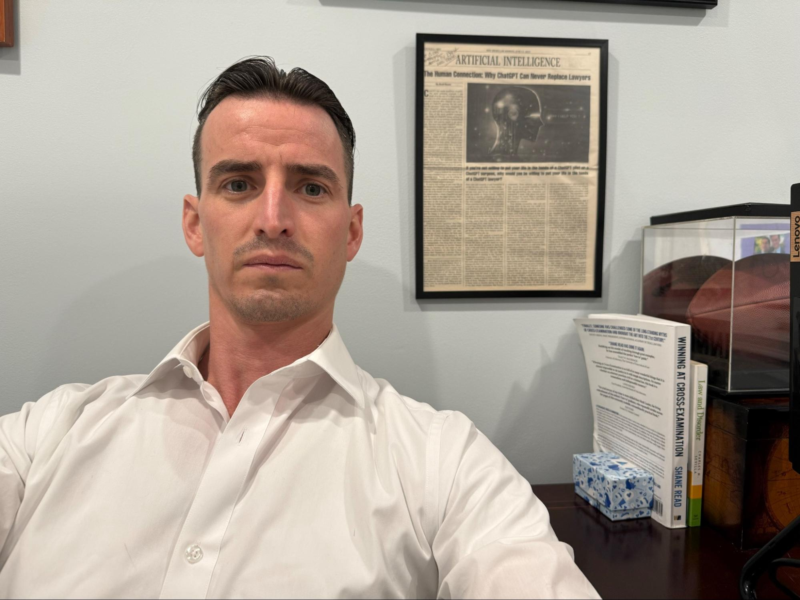

Rosen’s interest in balancing technology and human advocacy predates the current AI wave. In June 2023, he authored a commentary in the New Jersey Law Journal titled “The Human Connection: Why ChatGPT Can Never Replace Lawyers.” In it, he argued that while AI can assist lawyers in research and efficiency, it cannot replicate the empathy and emotion that come with jury trials. Comparing AI to autopilot in aviation or robotic assistance in surgery, Rosen wrote that “there’s something about having an artificial intelligence machine flying a plane without the backup or support of a human pilot,” warning that the same logic applies in law.

Later interviews in Law & Crime and Lawyer Herald continued the theme, with Rosen emphasizing that AI should be treated as a “transparency tool” rather than a substitute for human reasoning.

Across the country, legal scholars in California are studying similar issues. The Criminal Law & Justice Center at Berkeley Law recently launched an initiative on “AI for Public Defenders,” examining how artificial intelligence can assist with discovery and evidence analysis in state courts. Meanwhile, a California Court of Appeal opinion highlighted by the National Law Review cautioned attorneys about the ethical use of AI-generated content in filings—showing that the state’s legal community is already grappling with both the promise and pitfalls of these technologies.

Rosen’s early experience as an extern at the Bristol County District Attorney’s Office in Massachusetts, where he conducted a jury trial, helped shape his courtroom approach. “That externship taught me to think fast and stay grounded in the facts,” he said. “Now, technology helps me extend that discipline—checking every angle, every timeline, and every assumption before I step into court.”

As courts nationwide debate privacy, bias, and transparency in algorithmic systems, Rosen argues that lawyers must lead the conversation. “The justice system should never be automated,” he said. “But it should be informed by every tool that helps reveal the truth.”

DISCLAIMER: No part of the article was written by The Signal editorial staff.